
1. Re: Question About Forks and Modeling Rework Requests in jbpm4

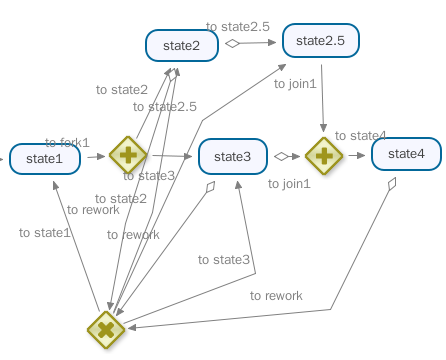

rmoskal Mar 12, 2010 6:14 PM (in response to rmoskal)

I've done a bit more research on this forking behavior and decided to test against the 4.4 snapshot as well as against 4.1, mainly to see what's changed. I simplified my process definition a little:

I thought I would implement the use case that would work for my client. When we get to state2 and state3 and we signal state2 to return to state1, we would simply end the state3 execution. When I did this under 4.4 some unexpected things happened, but some mysteries were cleared up from 4.1. The entire test case is attached (in case anyone wants to experiment), but the important part is here:

```java
// Let's end the bottom execution
ExecutionImpl bottomPath = (ExecutionImpl) processInstance.findActiveExecutionIn("state3");
bottomPath.end();
assertTrue(bottomPath.isEnded());

ExecutionImpl p = (ExecutionImpl) executionService.findExecutionById(processInstance.getId());
assertNull(p.getActivityName()); // In 4.1 this was "state1"

Collection<ExecutionImpl> childExecutions = p.getExecutions();
assertEquals(2, childExecutions.size()); // In 4.1 this was 1; the only item in the collection was "state1"

Iterator<ExecutionImpl> i = childExecutions.iterator();
ExecutionImpl o = i.next();
assertEquals("state1", o.getActivityName());

ExecutionImpl o2 = i.next();
assertEquals("state3", o2.getActivityName());
assertEquals(bottomPath.getId(), o2.getId()); // They are the same execution
assertFalse(o2.isEnded()); // But now it is not ended!

executionService.signalExecutionById(o.getId(), "to fork1");
```

As you can see, the execution for state3 seems to be ended, but later, when I query the executions of the top-level process, it comes back and appears not to be ended after all. (Under 4.1 things happen differently: the state3 execution is not returned by getExecutions, and state1 is the activity name of the top-level process instead of null.) So it's no surprise that I get a JDBC constraint error on moving through the fork again. state2 is executed, but not state3:

```
17:41:46,222 FIN | [ExecuteActivity] execution[ForkAssumptionTest.4.to state2] executes activity(state2)
17:41:46,229 WRN | [JDBCExceptionReporter] SQL Error: -104, SQLState: 23000
17:41:46,229 SEV | [JDBCExceptionReporter] Violation of unique constraint $$: duplicate value(s) for column(s) $$: SYS_CT_56 in statement [update JBPM4_EXECUTION set DBVERSION_=?, ACTIVITYNAME_=?, PROCDEFID_=?, HASVARS_=?, NAME_=?, KEY_=?, ID_=?, STATE_=?, SUSPHISTSTATE_=?, PRIORITY_=?, HISACTINST_=?, PARENT_=?, INSTANCE_=?, SUPEREXEC_=?, SUBPROCINST_=? where DBID_=? and DBVERSION_=?]
### EXCEPTION ###########################################
```

I'm curious as to whether I'm going about ending an execution correctly and whether this will get me the rework (looping or backtracking) behavior I'm looking for.

Thanks again!

Robert

-

ReworkPublicTest.java.zip 1.2 KB

-

-

2. Re: Question About Forks and Modeling Rework Requests in jbpm4

kukeltje Mar 13, 2010 3:54 AM (in response to rmoskal)

I'll need to take a more detailed look in the coming days, since you are really going boldly where no man has gone before, so please be patient. But it is kind of a nice new challenge in unstructured processes.

cheers,

Ronald

-

3. Re: Question About Forks and Modeling Rework Requests in jbpm4

kukeltje Mar 13, 2010 6:40 AM (in response to rmoskal)

Robert,

I'm looking at your process definition but have some questions/remarks:

- What is it you want to have reworked? E.g. rework 2 when you are in 3, or both 2 and 3 (via 1) when you are in 3, or...

- What is the full functionality you want to achieve (related to 1)?

- It seems to me you have overgeneralized the flow to the point that it is hard to see what will happen, and everything can influence other things.

- ...

Cheers,

Ronald

[edited]

-

4. Re: Question About Forks and Modeling Rework Requests in jbpm4

kukeltje Mar 13, 2010 8:36 AM (in response to rmoskal)

And you call ExecutionImpl.end(), which is wrong too: it's a non-public class. Do you want to end an execution in one of the 'legs' of the fork, like you mentioned? Then add another join and set multiplicity to 1. That will end the other legs of the fork.

-

5. Re: Question About Forks and Modeling Rework Requests in jbpm4

rmoskal Mar 13, 2010 9:35 AM (in response to kukeltje)

Ron:

Thanks for responding. Let me draw out a slightly simpler process (what I call a pipeline), and I think my needs and my client's use cases will become clear.

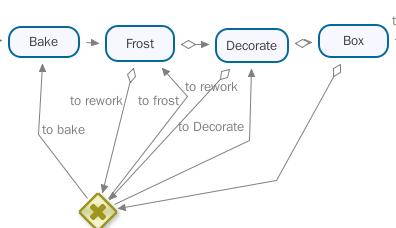

Take a look this work flow for making cakes:

At any step in the process, someone may discover that something went wrong and the cake has to go back for "rework". At any point past baking, another department may find a flaw in the baking and send the cake back. The decorators may find that the frosting is imperfect and send it back there. Once a cake is sent back in the pipeline, it travels forward through all the steps again (even if nothing needs to be done, the department at least has to sign off on the cake). I modelled it the above way to avoid having to connect every activity to every other activity. Every activity that can initiate a rework request has an outgoing transition to a common decision, and the decision node has an outgoing transition to every node that can be a target. So you'll notice that the bakers can only be the target of a rework request and the boxers can only initiate them.
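The routing described above can be sketched in plain Java, independent of jBPM. This is only a toy model under assumptions: the class `ReworkPipeline` and the stage names (bake, frost, decorate, box) are hypothetical stand-ins for the real activities, and the single `rework` method plays the role of the common decision node.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Toy model of a single-path pipeline with rework. NOT the jBPM API;
// class and stage names are hypothetical.
class ReworkPipeline {
    private final List<String> stages;                       // activities in pipeline order
    private final List<String> history = new ArrayList<>();  // every activity entered so far
    private int current = 0;                                 // index of the active stage

    ReworkPipeline(List<String> stages) {
        if (stages.isEmpty()) {
            throw new IllegalArgumentException("at least one stage is required");
        }
        this.stages = new ArrayList<>(stages);
        history.add(this.stages.get(0));
    }

    String currentStage() {
        return stages.get(current);
    }

    List<String> history() {
        return new ArrayList<>(history);
    }

    // Normal forward transition to the next stage.
    void advance() {
        if (current == stages.size() - 1) {
            throw new IllegalStateException("already at the last stage");
        }
        current++;
        history.add(stages.get(current));
    }

    // The shared "decision": route the work back to an earlier stage.
    // Only stages before the current one are valid targets, so the first
    // stage can never initiate rework and the last can never be a target.
    void rework(String target) {
        int idx = stages.indexOf(target);
        if (idx < 0) {
            throw new IllegalArgumentException("unknown stage: " + target);
        }
        if (idx >= current) {
            throw new IllegalArgumentException("rework target must precede the current stage");
        }
        current = idx;
        history.add(target);
    }

    public static void main(String[] args) {
        ReworkPipeline cake = new ReworkPipeline(Arrays.asList("bake", "frost", "decorate", "box"));
        cake.advance();        // frost
        cake.advance();        // decorate
        cake.rework("frost");  // decorators send the cake back to frosting
        cake.advance();        // decorate again, even if only to sign off
        cake.advance();        // box
        System.out.println(cake.history());
    }
}
```

After a rework the cake passes through every later stage again, which is why the history repeats the intermediate stages rather than jumping straight back to where the request came from.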

Really this is meant to give my client an overarching view of what is going on in their shop (the actual activity nodes are subprocesses of arbitrary complexity). These single-path pipelines work fine inside jBPM. The problem arose when we discovered that certain activities could go on in parallel (sorry, my imagination fails me with regard to extending the example).

In fact, all of the other use cases I've come up with work for pipelines with forks. The only time it fails is if you fork and then try to transition back to before the fork from one of the branches. Not knowing much about the internals, I figured the thing to do was to kill the execution along the other branch.

Hope this helps. I am appreciative of your time.

Regards,

Robert

-

6. Re: Question About Forks and Modeling Rework Requests in jbpm4

kukeltje Mar 13, 2010 10:36 AM (in response to rmoskal)

I got it this far... As you mentioned, it becomes difficult when adding forks/joins. That is what I am curious about: what should happen to the other leg of a fork if you transition to a node before the fork, as you mention in your last paragraph?

-

7. Re: Question About Forks and Modeling Rework Requests in jbpm4

rmoskal Mar 13, 2010 10:54 AM (in response to kukeltje)

Let's say there was a parallel activity to frosting the cake. If we requested a rework from frosting to baking, we would have to take the fork and do both frosting and this other activity again. Another way to say this is that if one branch after a fork goes back, they all must.

I was thinking of this in terms of simply ending the execution on the other branch of the fork. That's what I was trying to do in the test case I attached. I send a signal to state2 to take the rework path to state1, and then I tried to track down and kill the execution for state3.
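To make the intended rule concrete ("if one branch after a fork goes back, they all must"), here is a minimal sketch in plain Java. It is a toy model and deliberately does not use the jBPM API: the class `ForkReworkModel` and its methods are hypothetical, and it says nothing about how jBPM tracks executions internally. The activity names state1, state2 and state3 are borrowed from the test case.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Toy model, NOT the jBPM API: one parent execution plus one child
// execution per fork branch. All names here are hypothetical.
class ForkReworkModel {
    private String parentActivity;  // null while the fork's children are active
    private final List<String> activeBranches = new ArrayList<>();

    ForkReworkModel(String startActivity) {
        this.parentActivity = startActivity;
    }

    String parentActivity() {
        return parentActivity;
    }

    List<String> activeBranches() {
        return new ArrayList<>(activeBranches);
    }

    // Take the fork: the parent is no longer positioned in an activity,
    // and one child execution is created per branch.
    void fork(String... branchActivities) {
        if (parentActivity == null) {
            throw new IllegalStateException("fork already taken");
        }
        parentActivity = null;
        activeBranches.addAll(Arrays.asList(branchActivities));
    }

    // Rework requested by one branch: every sibling branch is ended as
    // well, and the parent is repositioned at the pre-fork target.
    void reworkFrom(String requestingBranch, String preForkTarget) {
        if (!activeBranches.contains(requestingBranch)) {
            throw new IllegalArgumentException("no active execution in " + requestingBranch);
        }
        activeBranches.clear();
        parentActivity = preForkTarget;
    }

    public static void main(String[] args) {
        ForkReworkModel process = new ForkReworkModel("state1");
        process.fork("state2", "state3");
        process.reworkFrom("state2", "state1");  // state3 is ended along with state2
        System.out.println(process.parentActivity() + " " + process.activeBranches());
        process.fork("state2", "state3");        // both branches start fresh
        System.out.println(process.activeBranches());
    }
}
```

In this model the second pass through the fork creates fresh branch executions, which is the behavior the rework use case needs; whether and how jBPM can be made to do the same through its public API is the open question.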