-

1. Re: Clustering Modeshape with EC2

rhauch Dec 8, 2014 10:33 AM (in response to agonist)

I was hoping that someone with more EC2 experience would be able to help.

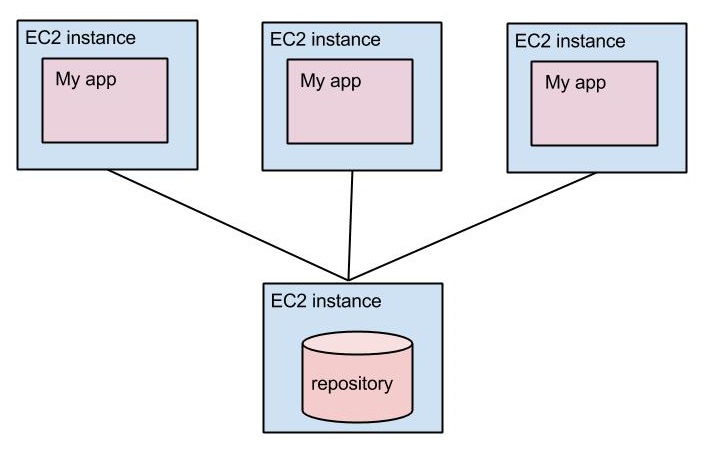

Are you planning on running ModeShape in all EC2 instances? If not, is the EC2 instance shown in the diagram as having the repository really running multiple repository instances? If so, then yes these need to be clustered. If not, then I'm curious why you've configured clustering.

I understand using S3 to detect each EC2 instance, but something is still unclear to me. Where is my persistent store supposed to be located? Inside a different EC2 instance that contains only the persistent store? Also, in this kind of configuration, is it better to have the persistent store on the filesystem or in a MySQL database?

Your persistent store can be local to the same EC2 instance(s) where the repository is running, but it can also be on another EC2 instance as long as the repository can see/access it. For example, if you are using MySQL, then the MySQL database can be anywhere as long as the JDBC cache store configured in your repository can connect to the database. There may be some performance benefit to having it in the same EC2 instance, but that's something you'll have to investigate. BTW, if you want the storage to be in a different EC2 instance than where ModeShape is running, then MySQL may be the only effective option.

When persisting the content in the same EC2 instance where the repository is running, both the filesystem and MySQL should theoretically work on EC2. If you're using ModeShape 4 (with Infinispan 6), then you might also consider the LevelDB cache store. Regardless, you'll have to evaluate the performance, durability guarantees, and other characteristics of these options.

How do I configure this in my configuration?

Configuring the cache store is straightforward. Your configuration already uses the file cache store (in your Infinispan config file). As for other cache stores, look at our examples (here's one that uses MySQL, though it also uses OPTIMISTIC locking while I'd strongly suggest using PESSIMISTIC).

-

2. Re: Clustering Modeshape with EC2

ma6rl Dec 8, 2014 5:02 PM (in response to agonist)

We are currently running a ModeShape cluster on EC2.

We currently run our ModeShape instances inside WildFly using the ModeShape subsystem, so our configuration is done via the subsystem XML, but the principles are the same.

Each EC2 instance in the cluster has our application deployed on it along with the ModeShape subsystem (but the same principles should apply if you're running ModeShape embedded in your application).

Each deployment is configured to use the same Infinispan cache container with a replicated cache. The replicated cache is configured to use a shared cache store, in our case a MySQL database running in RDS. This is done using an Infinispan JDBC store (we use the string-keyed store).

We use the JGroups S3_PING protocol, which allows new instances to register/deregister and be discovered without using multicast, which is not supported in AWS.
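To make the discovery idea concrete, here is a minimal toy sketch of registry-based discovery. It is not JGroups code: an in-memory map stands in for the S3 bucket, and the class name, method names, and addresses are all hypothetical. The only point is that each node writes its own entry to a shared location and reads the other entries, so no multicast is needed.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Toy model of registry-based discovery. An in-memory map stands in for
// the S3 bucket; nothing here talks to AWS or JGroups.
class SharedRegistryDiscovery {
    // Hypothetical stand-in for the bucket: node name -> network address.
    private final Map<String, String> bucket = new ConcurrentHashMap<>();

    // A starting node writes its own entry so that others can find it.
    public void register(String nodeName, String address) {
        bucket.put(nodeName, address);
    }

    // A node leaving the cluster removes its entry.
    public void deregister(String nodeName) {
        bucket.remove(nodeName);
    }

    // A node reads every entry except its own to learn its peers' addresses.
    public List<String> discoverPeers(String self) {
        List<String> peers = new ArrayList<>();
        for (Map.Entry<String, String> entry : bucket.entrySet()) {
            if (!entry.getKey().equals(self)) {
                peers.add(entry.getValue());
            }
        }
        return peers;
    }

    public static void main(String[] args) {
        SharedRegistryDiscovery registry = new SharedRegistryDiscovery();
        registry.register("node-a", "10.0.0.1:7800"); // made-up addresses
        registry.register("node-b", "10.0.0.2:7800");
        System.out.println(registry.discoverPeers("node-a"));
        registry.deregister("node-b");
        System.out.println(registry.discoverPeers("node-a"));
    }
}
```

In a real deployment the protocol is enabled in the JGroups stack configuration rather than written by hand.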

What this means in practice is that each instance has its own copy of the repository cached in memory (via Infinispan), but all instances share a single persisted cache store in a MySQL database. Writes to a node result in the changes being replicated to each of the instances' caches as well as being persisted in the database. Currently the size of the repository in memory hasn't required us to start evicting entries from memory, but we will be looking at that shortly.

Our application is capable of performing multiple concurrent updates to the same JCR nodes, which means we need to use a fairly aggressive locking strategy which includes:
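The topology described above can be pictured with a small toy model. This is a sketch only: plain Java maps stand in for the Infinispan caches and for the MySQL cache store, and the class and method names are hypothetical. It also assumes, as a simplification, that a joining node fills its memory from the shared store.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Toy model only: plain maps stand in for the per-instance Infinispan
// caches and for the shared MySQL cache store.
class ReplicatedCacheSketch {
    // The single shared persistent store (the MySQL database in the post).
    private final Map<String, String> sharedStore = new HashMap<>();
    // One full in-memory copy per cluster member.
    private final List<Map<String, String>> nodes = new ArrayList<>();

    // Simplifying assumption: a joining node loads its copy from the store.
    public synchronized int join() {
        nodes.add(new HashMap<>(sharedStore));
        return nodes.size() - 1;
    }

    // A write received by any node is persisted once and applied to every
    // member's memory before the call returns (synchronous replication).
    public synchronized void put(int nodeId, String key, String value) {
        nodes.get(nodeId); // fails fast if the receiving node is unknown
        sharedStore.put(key, value);
        for (Map<String, String> memory : nodes) {
            memory.put(key, value);
        }
    }

    // Reads are served from the local in-memory copy of the given node.
    public synchronized String get(int nodeId, String key) {
        return nodes.get(nodeId).get(key);
    }

    public synchronized int storedEntries() {
        return sharedStore.size();
    }

    public static void main(String[] args) {
        ReplicatedCacheSketch cluster = new ReplicatedCacheSketch();
        int a = cluster.join();
        int b = cluster.join();
        cluster.put(a, "/docs/report", "v1");
        System.out.println(cluster.get(b, "/docs/report")); // v1
        System.out.println(cluster.storedEntries());        // 1
    }
}
```

The write is persisted once, no matter how many members hold a copy, which is what makes a single shared store workable as instances are added and removed.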

- Synchronous replication

- NON_XA PESSIMISTIC transactions

- READ_COMMITTED locking with lock striping disabled
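The last item is easy to misread, so here is a minimal sketch of what lock striping means in general. It is a toy comparison, not Infinispan's implementation, and all class names are hypothetical. With striping, keys share a fixed pool of locks, so two unrelated keys can contend for the same lock. With striping disabled, each key gets its own lock.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.locks.ReentrantLock;

// Toy comparison of striped and per-key locking.
class LockStripingSketch {
    // Striped: a fixed pool of locks shared by all keys.
    static final class StripedLocks {
        private final ReentrantLock[] stripes;

        StripedLocks(int count) {
            stripes = new ReentrantLock[count];
            for (int i = 0; i < count; i++) {
                stripes[i] = new ReentrantLock();
            }
        }

        // The key's hash selects a stripe, so unrelated keys may collide.
        ReentrantLock lockFor(Object key) {
            return stripes[Math.floorMod(key.hashCode(), stripes.length)];
        }
    }

    // Per-key: one lock per key, created on demand (striping disabled).
    static final class PerKeyLocks {
        private final Map<Object, ReentrantLock> locks = new ConcurrentHashMap<>();

        ReentrantLock lockFor(Object key) {
            return locks.computeIfAbsent(key, k -> new ReentrantLock());
        }
    }

    public static void main(String[] args) {
        StripedLocks striped = new StripedLocks(16);
        PerKeyLocks perKey = new PerKeyLocks();
        // Integer.hashCode() is the value itself, so 1 and 17 both map to stripe 1 of 16.
        System.out.println(striped.lockFor(1) == striped.lockFor(17)); // true
        System.out.println(perKey.lockFor(1) == perKey.lockFor(17));   // false
    }
}
```

Striping keeps the number of locks bounded, while per-key locks avoid unrelated updates blocking each other, which matters when many concurrent writes target the same repository.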

With the above configuration we have been able to automatically scale our EC2 instances from 2 (minimum) to 10 (maximum) under simulated load without any issues.

I have not attempted to use invalidation mode, as it does not seem like an ideal fit for our application. Instead, we are starting to investigate distribution mode, with the goal of being able to scale to hundreds of nodes as required.

-

3. Re: Clustering Modeshape with EC2

agonist Dec 10, 2014 5:43 AM (in response to agonist)

Thanks for your answers, they help. For the moment I have successfully connected my application to an RDS MySQL database, so I'll continue testing, but it seems to work fine.