File handle (pipe/selector) pseudo-leak

gjaekel Nov 29, 2019 5:23 AM

I'm facing a "pseudo-leak" of file handles. Handles are "lost" in triples of two pipes and one epoll selector. From this and other hints, they seem to be created by the Java NIO channels used by the XNIO module. Over some hours, the number of file handles in use grows into the thousands.
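One way to check for such triples is to classify the open descriptors of the JVM process by reading the symlinks in /proc/<pid>/fd. This is only a minimal sketch in Python. It assumes a Linux host where pipes show up as `pipe:[inode]` and epoll instances as `anon_inode:[eventpoll]`. The function names are invented for illustration.

```python
import os
from collections import Counter


def classify_fds(fd_dir):
    """Count descriptors by kind in a /proc/<pid>/fd-style directory."""
    kinds = Counter()
    for name in os.listdir(fd_dir):
        try:
            target = os.readlink(os.path.join(fd_dir, name))
        except OSError:
            continue  # descriptor was closed between listdir and readlink
        if target.startswith("pipe:"):
            kinds["pipe"] += 1
        elif target == "anon_inode:[eventpoll]":
            kinds["epoll"] += 1
        else:
            kinds["other"] += 1
    return kinds


def estimate_selector_triples(kinds):
    """Rough upper bound on (2 pipes + 1 epoll) triples; assumes each selector holds one pipe pair."""
    return min(kinds["pipe"] // 2, kinds["epoll"])
```

Calling `classify_fds("/proc/12345/fd")` (with the real PID in place of the placeholder 12345) at intervals should show the pipe count growing at roughly twice the rate of the epoll count if the triple pattern holds.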

I discovered that the handles are released (at least) by invoking a Full GC. I'm using a well-tuned Java 8 JVM with G1GC enabled, and as a pleasant result a Full GC happens very rarely. The downside is that thousands of these file handle triples pile up in the meantime. Because the handles can be released, it's not a real leak but an effect of a Soft/Weak/Phantom reference.

We reached the assigned OS limit (the JVM with WildFly runs inside an LX container) twice last week. Therefore, as a first workaround for production, I wrote a watchdog which invokes a Full GC using jcmd if the number of pipe handles exceeds a limit.

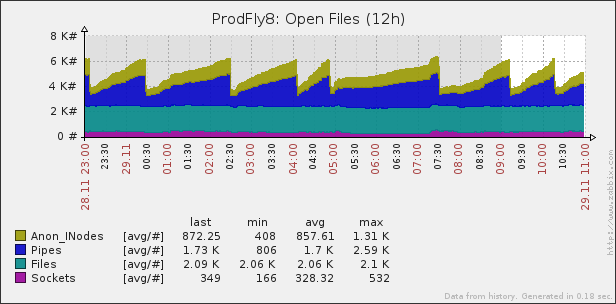

Here is a graph of the typical behaviour: at a limit of 2500 pipes, the watchdog invokes a Full GC.
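The watchdog logic can be sketched roughly as follows. This is not the actual script, just a minimal Python illustration. It assumes a Linux /proc filesystem and that `jcmd` is on the PATH and run as the same user as the JVM. The function names and the 60-second polling interval are made up. `jcmd <pid> GC.run` requests a `System.gc()`, which only results in a Full GC if the JVM is not started with options such as `-XX:+DisableExplicitGC` or `-XX:+ExplicitGCInvokesConcurrent`.

```python
import os
import subprocess
import time

PIPE_LIMIT = 2500  # threshold mentioned with the graph


def count_pipes(pid):
    """Count descriptors of the process whose /proc link target is a pipe."""
    fd_dir = f"/proc/{pid}/fd"
    count = 0
    for name in os.listdir(fd_dir):
        try:
            if os.readlink(os.path.join(fd_dir, name)).startswith("pipe:"):
                count += 1
        except OSError:
            pass  # descriptor vanished while scanning
    return count


def check_once(pid, limit=PIPE_LIMIT, count_fn=count_pipes, run=subprocess.run):
    """Request a GC via jcmd if the pipe count exceeds the limit.

    Returns True if a GC was requested, False otherwise.
    """
    if count_fn(pid) <= limit:
        return False
    run(["jcmd", str(pid), "GC.run"], check=True)
    return True


def watch(pid, interval_s=60):
    """Poll forever; the interval is an arbitrary choice."""
    while True:
        check_once(pid)
        time.sleep(interval_s)
```

`count_fn` and `run` are parameters only so that the decision logic can be tried out without a running JVM.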

This is observed on a (load-balanced) pair of WildFly 13 instances running more than 20 applications. It doesn't seem to be related to a specific application, because it also happens (on both WildFly instances of the pair) if I remove individual applications from load balancing (on one of the pair).

It doesn't "show up" on our other (pairs of) WildFly instances, but those host a different set of applications with other use cases. There is more memory turnover and more "pressure" on the heap. Maybe this triggers a timely release of the objects holding the file handles in some other way.

I have already limited the number of threads to 256, without effect.

    /subsystem=io/worker=default:read-resource(include-runtime)
    {
        "outcome" => "success",
        "result" => {
            "busy-task-thread-count" => 15,
            "core-pool-size" => 2,
            "io-thread-count" => 80,
            "io-threads" => "80",
            "max-pool-size" => 256,
            "queue-size" => 0,
            "shutdown-requested" => false,
            "stack-size" => "0",
            "task-core-threads" => "2",
            "task-keepalive" => "60000",
            "task-max-threads" => "256",
            "outbound-bind-address" => undefined,
            "server" => {
                "/10.69.62.38:28009" => undefined,
                "/10.69.62.38:28080" => undefined
            }
        }
    }

Is this a known bug in XNIO? Is it already fixed?

P.S.: I found a thread with similar observations, see "Possible file handle leak in WildFly 12+".