RHQ currently has a pretty powerful authorization mechanism, which people nowadays would call "RBAC" or Role-Based Access Control.

[ Please note this post is not about discussing the mentioned RBAC scheme; RBAC is described to illustrate the proposal. The discussion of RBAC for RHQ.next will be a separate document ]

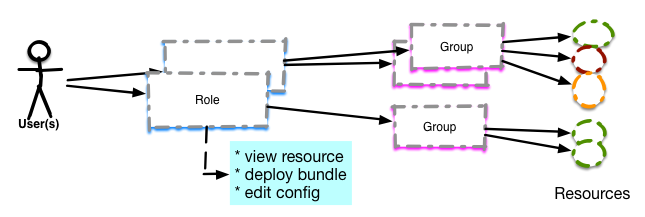

Users are associated with roles, and the roles are associated with (resource) groups, which then contain the actual resources.

Each Role has a set of permissions, which then apply to the resources of the group.
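To make the chain from user to resource concrete, here is a minimal sketch of such a permission check. The class names, the permission strings "VIEW" and "CONTROL", and the resource names are made up for illustration and are not RHQ's actual domain model.

```python
from __future__ import annotations
from dataclasses import dataclass, field


@dataclass
class ResourceGroup:
    name: str
    resources: set[str] = field(default_factory=set)


@dataclass
class Role:
    name: str
    permissions: set[str] = field(default_factory=set)   # hypothetical permission names
    groups: list[ResourceGroup] = field(default_factory=list)


@dataclass
class User:
    name: str
    roles: list[Role] = field(default_factory=list)


def is_allowed(user: User, permission: str, resource: str) -> bool:
    """True if one of the user's roles carries `permission` and is
    attached to a group that contains `resource`."""
    return any(
        permission in role.permissions
        and any(resource in group.resources for group in role.groups)
        for role in user.roles
    )


# Illustrative data only.
web = ResourceGroup("web-servers", {"httpd-1", "httpd-2"})
operator = Role("operator", {"VIEW", "CONTROL"}, [web])
alice = User("alice", [operator])

print(is_allowed(alice, "CONTROL", "httpd-1"))  # True
print(is_allowed(alice, "CONTROL", "db-1"))     # False
```

Note that the permission and the group membership have to come from the same role; a permission on one role does not leak to the groups of another.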

In the illustration above you can see two kinds of groups:

- The one with the colored circles is a so-called mixed group, which is mostly used for permission purposes, to limit what resources a user can see and use

- The one below is a so-called compatible group, which most often serves to apply operations to all of its resources, which have the same resource type (and therefore are compatible). Other uses are group monitoring (charting + alerting) or provisioning to all members of the group

So why is that not enough?

We have seen several situations where

- A service provider is monitoring the infrastructure of several clients using RHQ and also wants to give each client access to their own resources only. This is doable via the grouping above, but certainly does not allow for self-service

- Several departments inside ACME corporation want their resources monitored but do not want to set up their own RHQ server

- Departments inside ACME corp want their own pre-sets ("templates" in RHQ speak) for alert definitions or collection intervals

- Departments inside ACME corp should not see resources of other departments unless explicitly allowed

Proposal

To cater for the above needs I propose to add tenants to RHQ.next.

- By default there will always be one default tenant, and all users and resources belong to that tenant. The situation would be as it is today.

- On top of that it should be possible to create tenants that get their own tenant id and the possibility to assign a tenant-specific super user (the default rhqadmin super duper user would stay)

- New users in the system can then be added to individual tenants and would by default only be able to see objects of that tenant.

- It may be possible to explicitly grant view (more?) rights on objects to users of other tenants

- It may even be possible to add a user to multiple tenants and/or groups within other tenants, to allow the admin on pager duty to see system state outside their own tenant without being the super duper user.

- Agents will report data to exactly one tenant; their configuration will need to be enriched by a tenant-specific token.

- All operations like resource search, tree/graph traversal etc. will be limited to the objects of the local tenant

- Alert templates / metric collection interval templates will exist globally as super-default. Each tenant will get the same set of templates that are inherited and copy-on-write. Those can then be refined as today by group-specific and individual settings.
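A few of the points above (one tenant per agent token, searches limited to the local tenant, copy-on-write templates) can be sketched as follows. This is only an illustration under assumptions: `Tenant`, `Inventory`, the token strings and the template keys are hypothetical names, and `ChainMap` from the Python standard library stands in for whatever inheritance mechanism RHQ.next would actually use.

```python
from collections import ChainMap
from dataclasses import dataclass, field

DEFAULT_TENANT = "default"

# Global "super-default" templates; keys and values are illustrative only.
GLOBAL_TEMPLATES = {"collection_interval_s": 60, "cpu_alert_threshold": 0.9}


@dataclass
class Tenant:
    tenant_id: str
    agent_token: str                     # hypothetical tenant-specific token
    overrides: dict = field(default_factory=dict)

    @property
    def templates(self) -> ChainMap:
        # Reads fall through to the global defaults; writes land in
        # `overrides` only, so the global templates are never modified.
        return ChainMap(self.overrides, GLOBAL_TEMPLATES)


@dataclass
class Resource:
    name: str
    tenant_id: str = DEFAULT_TENANT


class Inventory:
    def __init__(self, tenants):
        self._by_token = {t.agent_token: t for t in tenants}
        self._resources = []

    def report(self, agent_token: str, resource_name: str) -> Resource:
        """Register a resource under the single tenant the agent's token maps to."""
        tenant = self._by_token[agent_token]  # raises KeyError for an unknown token
        resource = Resource(resource_name, tenant.tenant_id)
        self._resources.append(resource)
        return resource

    def search(self, tenant_id: str, text: str = ""):
        """Return only resources of `tenant_id` whose name contains `text`."""
        return [r for r in self._resources
                if r.tenant_id == tenant_id and text in r.name]


acme = Tenant("acme", agent_token="tok-acme")
redhat = Tenant("redhat", agent_token="tok-redhat")
inventory = Inventory([acme, redhat])
inventory.report("tok-acme", "httpd-1")
inventory.report("tok-redhat", "httpd-2")

acme.templates["collection_interval_s"] = 30   # tenant-local override
print([r.name for r in inventory.search("acme", "httpd")])   # ['httpd-1']
print(acme.templates["collection_interval_s"],
      redhat.templates["collection_interval_s"])              # 30 60
```

The override for one tenant leaves both the global defaults and the other tenant untouched, which is the behaviour the copy-on-write wording is after.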

For user credentials we will have a tuple <tenantid, userid<tenantid>,password>. For the login dialog we will need to decide whether to encode the tenant in the user name, e.g. "heiko.acme" and "heiko.redhat", or to offer an explicit list of tenants to choose from. As many users rely on LDAP for user identification and SSO, an explicit tenant id that is not encoded in the user name will likely be preferred.
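The two login options can be compared in a small sketch. The function `resolve_login` and its parameters are hypothetical; the "last dot separates the tenant" rule is just one possible reading of the "heiko.acme" example.

```python
from typing import Optional, Tuple


def resolve_login(user_name: str,
                  selected_tenant: Optional[str] = None,
                  default_tenant: str = "default") -> Tuple[str, str]:
    """Return (tenant_id, user_id) for a login attempt.

    An explicitly selected tenant wins and the user name is kept verbatim.
    Otherwise the text after the last "." is treated as the tenant id.
    A name without a usable "." falls back to the default tenant.
    """
    if selected_tenant:
        return selected_tenant, user_name
    user_id, sep, tenant_id = user_name.rpartition(".")
    if sep and user_id and tenant_id:
        return tenant_id, user_id
    return default_tenant, user_name


print(resolve_login("heiko.acme"))                         # ('acme', 'heiko')
print(resolve_login("heiko"))                              # ('default', 'heiko')
print(resolve_login("john.doe", selected_tenant="acme"))   # ('acme', 'john.doe')
print(resolve_login("john.doe"))                           # ('doe', 'john')
```

The last call shows why the encoded variant is fragile: a directory name such as "john.doe" that already contains a dot gets misread as user "john" in tenant "doe", whereas the explicit tenant selection keeps the name intact.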

We also need to decide how much nesting of tenants is needed. There may be situations where one tenant is e.g. the ecommerce-platform group, which takes care of all e-commerce sites within ACME, and where the subtenants could be the individual e-commerce apps.
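If nesting is allowed, one possible rule would be that a tenant sees its own objects plus those of all its subtenants, but not the other way round. That rule is an assumption made for this sketch, not something decided here; the tenant names besides ecommerce-platform are made up.

```python
from typing import Dict, Optional, Set

# child tenant id -> parent tenant id (None marks a top-level tenant)
PARENT: Dict[str, Optional[str]] = {
    "acme": None,
    "ecommerce-platform": "acme",
    "shop-app": "ecommerce-platform",
    "billing-app": "ecommerce-platform",
}


def visible_tenants(tenant_id: str) -> Set[str]:
    """Return the tenant itself plus all of its direct and indirect subtenants."""
    visible = {tenant_id}
    changed = True
    while changed:                      # repeat until no new subtenant is found
        changed = False
        for child, parent in PARENT.items():
            if parent in visible and child not in visible:
                visible.add(child)
                changed = True
    return visible


print(sorted(visible_tenants("ecommerce-platform")))
# ['billing-app', 'ecommerce-platform', 'shop-app']
print(sorted(visible_tenants("shop-app")))
# ['shop-app']
```

With arbitrary nesting depth every scoped query has to resolve such a set first, which is one reason to settle the allowed depth early.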