-

1. Re: Best Practices to insert millions of records (Batch Insert)

smunirat-redhat.com Jan 13, 2016 1:37 AM (in response to kkrishnashankar) My assumption is that you are creating a Map or object for each single insert. If that is true, try aggregating all the Maps into a List and then inserting with batch=true on the SQL endpoint.

For example: sql:query?batch=true. This should improve performance. You can also split the list into batches and process them in parallel.
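A minimal sketch of the "split the list into batches" step in plain Java, assuming the rows have already been collected into a List. The class name `BatchPartitioner` and the `partition` helper are hypothetical and not part of Camel; each resulting sublist could then be sent as one message body to an endpoint such as sql:query?batch=true.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper (not a Camel class): cuts one large list of rows
// into consecutive batches of at most batchSize elements.
public class BatchPartitioner {

    public static <T> List<List<T>> partition(List<T> rows, int batchSize) {
        if (batchSize <= 0) {
            throw new IllegalArgumentException("batchSize must be positive");
        }
        List<List<T>> batches = new ArrayList<>();
        for (int start = 0; start < rows.size(); start += batchSize) {
            int end = Math.min(start + batchSize, rows.size());
            // Copy the view so each batch is independent of the source list.
            batches.add(new ArrayList<>(rows.subList(start, end)));
        }
        return batches;
    }
}
```

The last batch may be smaller than the others; an empty input yields no batches at all.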

-

2. Re: Best Practices to insert millions of records (Batch Insert)

kkrishnashankar Jan 13, 2016 9:28 AM (in response to smunirat-redhat.com) Thanks for the reply. I followed similar steps.

I used parallel processing and batch=true.

Processing time came down to less than a minute (30-45 seconds).

-

3. Re: Best Practices to insert millions of records (Batch Insert)

kkrishnashankar Jan 15, 2016 12:03 PM (in response to smunirat-redhat.com) Are you referring to the Aggregate processor? I'm not sure how it helps to reduce processing time.

I actually tried it, but there was no significant change.

thanks

Krishna

-

4. Re: Best Practices to insert millions of records (Batch Insert)

smunirat-redhat.com Jan 15, 2016 12:23 PM (in response to kkrishnashankar) I did not understand. Did the batch parameter bring down the processing time or not? Your answers seem to conflict.

-

5. Re: Best Practices to insert millions of records (Batch Insert)

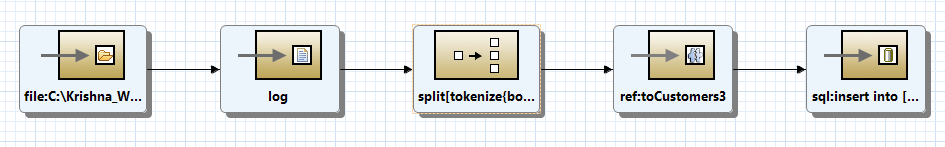

kkrishnashankar Jan 15, 2016 12:59 PM (in response to smunirat-redhat.com) I enabled batch in the SQL component and enabled parallel processing in the split.

Am I clear?

-

6. Re: Best Practices to insert millions of records (Batch Insert)

kkrishnashankar Jan 15, 2016 1:05 PM (in response to smunirat-redhat.com) If you have any PDFs/docs showcasing EIP patterns and use cases, please share them.

It would be a great help.

-

7. Re: Best Practices to insert millions of records (Batch Insert)

smunirat-redhat.com Jan 15, 2016 4:51 PM (in response to kkrishnashankar) I don't know if there is a documented best practice for this, but we can use the Splitter EIP with a file endpoint to achieve better performance. Can you try something like the following:

<camelContext trace="false"
    xmlns="http://camel.apache.org/schema/spring">
  <propertyPlaceholder id="placeholder" location="classpath:sql.properties" />
  <camel:dataFormats>
    <camel:jaxb contextPath="com.sundar" id="orderConv" partClass="com.sundar.Order"/>
  </camel:dataFormats>
  <camel:route>
    <camel:from uri="file:///Users/smunirat/apps/myfile"/>
    <camel:threads poolSize="10" customId="true">
      <camel:split streaming="true" parallelProcessing="true">
        <!-- tokenizing, processing, and insert logic can go here -->
      </camel:split>
    </camel:threads>
  </camel:route>
</camelContext>

I was able to insert 100,000 order records into a MySQL database in about 1 min 37 seconds.

A sample order node is:

<order>
  <orderid>ordfc4d76bc-434d-46d8-967b-5ea3a209ab95</orderid>
  <productid>prd104ac81f-b183-47b3-b758-283f4eb240f5</productid>
  <productName>vRawRkwFqA</productName>
  <productDescription>dbmgtnpXUrrXQPymxhcxJfAcfZanBgRlkGQtktVLwRDkSxSWoBYiVXtOECDQOOJfoqcBGNYcNOAVTMLouJlYslGYVOquVQfCfuM</productDescription>
  <customerId>nxGYOiqakRmWv</customerId>
  <firstName>qkwocXeflC</firstName>
  <lastName>eyqhVMNyj</lastName>
</order>

Regards

Sundar M R

-

8. Re: Best Practices to insert millions of records (Batch Insert)

smunirat-redhat.com Jan 18, 2016 5:08 PM (in response to kkrishnashankar) You can also use the Splitter and Aggregator EIPs, like below.

<camel:split streaming="true">
  <tokenize token="order" xml="true" />
  <camel:unmarshal ref="orderConv"/>
  <camel:process ref="converMap"/>
  <camel:aggregate strategyRef="listStr"
      completionSize="100000" parallelProcessing="true">
    <camel:correlationExpression>
      <camel:constant>true</camel:constant>
    </camel:correlationExpression>
    <camel:to uri="sqlComponent:{{sql.insertNewRecord}}?batch=true" />
  </camel:aggregate>
</camel:split>

Here your aggregation strategy can simply be a class that extends AbstractListAggregationStrategy. You can also enclose the entire split in a threads element.

Hope this helps your case.
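To make the effect of completionSize concrete, here is a plain-Java sketch of the same idea: rows are collected into a list, and the whole list is handed to a sink once it reaches the configured size. It does not use Camel; `BatchingCollector`, its `sink` callback, and the explicit `flush()` are hypothetical names for illustration only. In a real route the sink would correspond to the batch=true SQL endpoint, and whether a final partial group gets sent depends on the aggregator's other completion settings, which the thread does not show.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

// Hypothetical stand-in for "aggregate into a List, complete at N rows".
public class BatchingCollector<T> {
    private final int completionSize;
    private final Consumer<List<T>> sink;
    private List<T> current = new ArrayList<>();

    public BatchingCollector(int completionSize, Consumer<List<T>> sink) {
        if (completionSize <= 0) {
            throw new IllegalArgumentException("completionSize must be positive");
        }
        this.completionSize = completionSize;
        this.sink = sink;
    }

    // Adds one row; emits the collected list as soon as it is full.
    public void add(T row) {
        current.add(row);
        if (current.size() >= completionSize) {
            flush();
        }
    }

    // Emits whatever has been collected so far (no-op when empty).
    // Call this at end of input so a partial last batch is not lost.
    public void flush() {
        if (current.isEmpty()) {
            return;
        }
        List<T> done = current;
        current = new ArrayList<>();
        sink.accept(done);
    }
}
```

With a large completion size, one database round trip covers many rows, which is where the gain over single inserts comes from; the trade-off is that the whole batch is held in memory until it is emitted.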

-

9. Re: Best Practices to insert millions of records (Batch Insert)

kkrishnashankar Jan 19, 2016 9:27 AM (in response to smunirat-redhat.com)Hello Sundar,

Thanks for the reply, and sorry for the delay in responding (OOO).

Do you mean it can only be achieved after enabling streaming and parallel processing?

Thanks,

Krishna.

-

10. Re: Best Practices to insert millions of records (Batch Insert)

smunirat-redhat.com Jan 20, 2016 9:39 AM (in response to kkrishnashankar) If you would like to reduce the memory footprint for large files and order is not important, use streaming. Parallel processing enables a faster rate, as the name suggests, ...
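As a rough illustration of why parallel processing gives up ordering, here is a plain-Java sketch that hands batches to a fixed thread pool. It is not how Camel implements the splitter; `ParallelBatchRunner`, `runAll`, and the `insertBatch` callback are hypothetical names, and the callback stands in for whatever performs the actual insert and reports how many rows it wrote.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.function.ToIntFunction;

// Hypothetical helper: runs one insert task per batch on a fixed pool
// and returns the total number of rows reported by the tasks.
public class ParallelBatchRunner {

    public static <T> int runAll(List<List<T>> batches, int poolSize,
                                 ToIntFunction<List<T>> insertBatch) {
        ExecutorService pool = Executors.newFixedThreadPool(poolSize);
        try {
            List<Future<Integer>> pending = new ArrayList<>();
            for (List<T> batch : batches) {
                Callable<Integer> task = () -> insertBatch.applyAsInt(batch);
                // Tasks may start and finish in any order across threads.
                pending.add(pool.submit(task));
            }
            int total = 0;
            for (Future<Integer> result : pending) {
                total += result.get(); // waits for that batch to finish
            }
            return total;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while inserting", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("a batch insert failed", e.getCause());
        } finally {
            pool.shutdown();
        }
    }
}
```

The pool size of 10 used in the earlier route would be passed as `poolSize` here; the right value depends on how many concurrent connections the database can serve.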

-

11. Re: Best Practices to insert millions of records (Batch Insert)

kkrishnashankar Jan 20, 2016 10:54 AM (in response to smunirat-redhat.com)Hello Sundar,

Can you please share the codebase?

I appreciate your help.

Thanks,

Krishna

-

13. Re: Best Practices to insert millions of records (Batch Insert)

kkrishnashankar Jan 20, 2016 2:11 PM (in response to smunirat-redhat.com) Thanks Sundar, let me check.

I'll report back with the results and/or issues (if any).

-

14. Re: Best Practices to insert millions of records (Batch Insert)

kkrishnashankar Jan 20, 2016 3:03 PM (in response to smunirat-redhat.com) Is the codebase backward compatible with Fuse 6.2.0?

On another note, I've noticed an issue with the pom.xml: I'm getting a validation error.