1. Re: Batch subsystem in console

jaikiran Feb 10, 2020 10:57 PM (in response to vokail)

Do you mean there are jBeret (batch) jobs running on your WildFly setup that are no longer shown in the admin console? Maybe cfang knows something about this?

4. Re: Batch subsystem in console

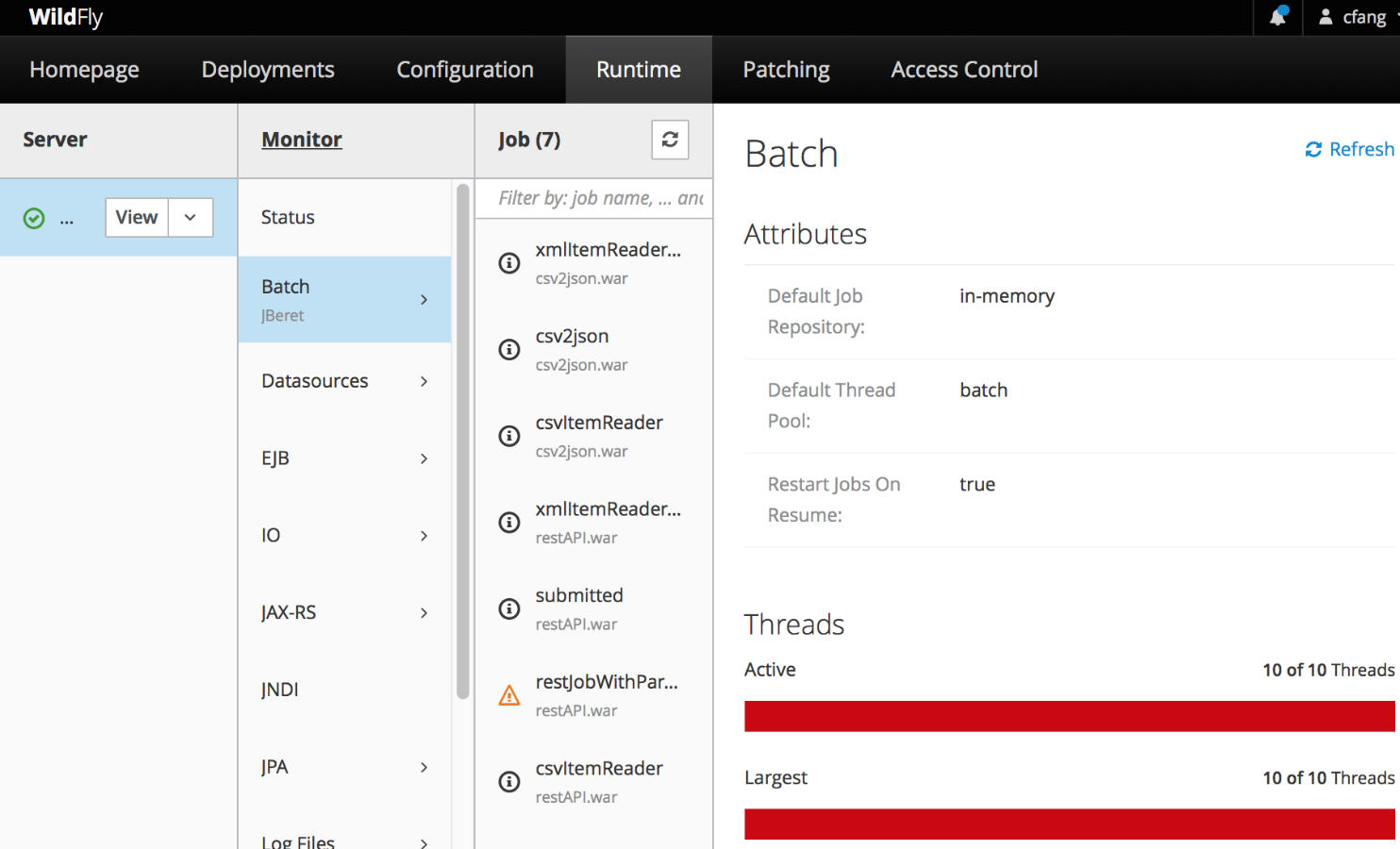

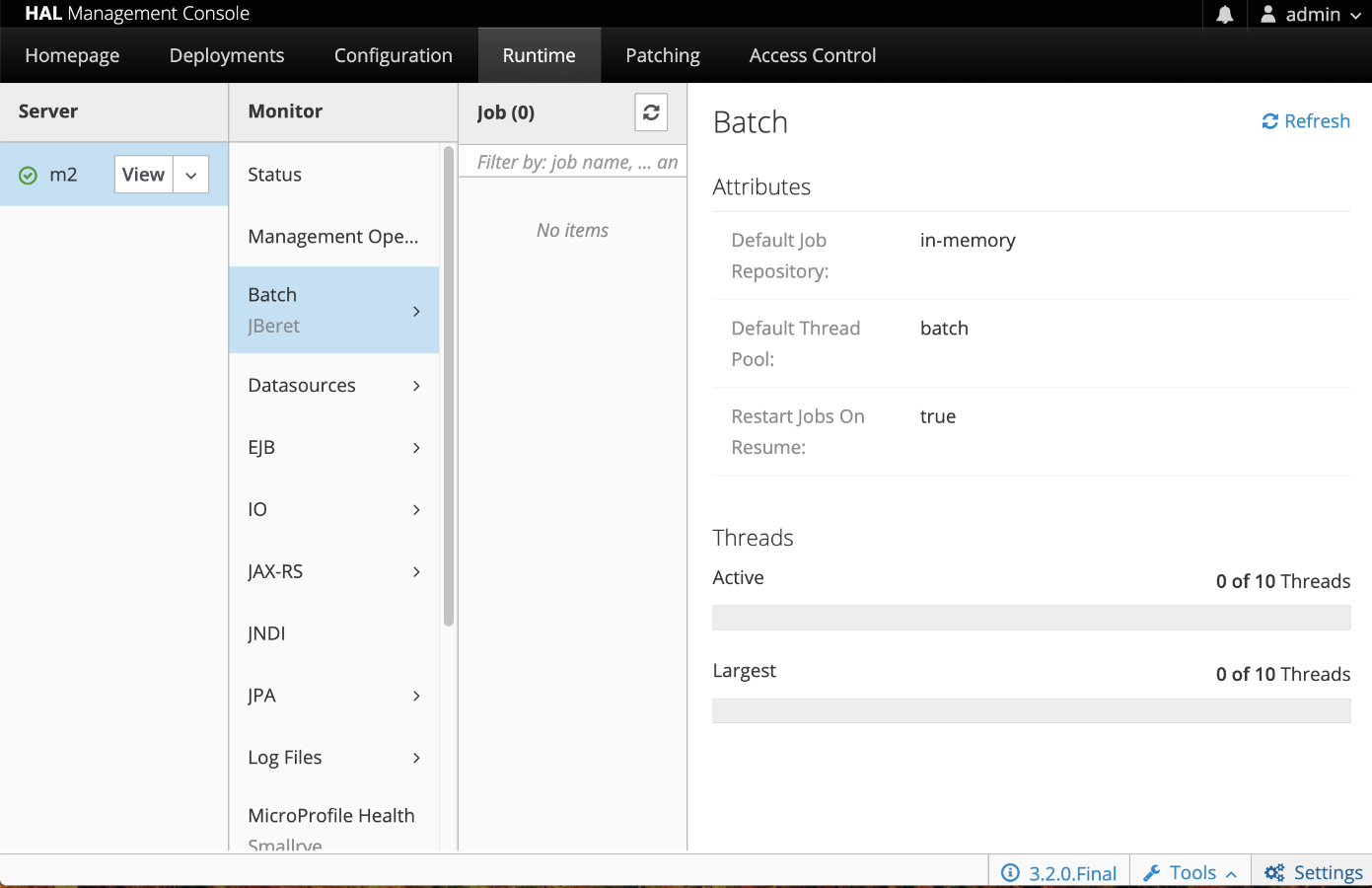

cfang Feb 17, 2020 11:57 AM (in response to vokail)

No need to configure anything. The Jobs column should be there in all cases. I've just tried WildFly 17.0.1.Final, and I could see the Jobs column and the batch runtime attributes on the right, with no batch application deployed. I'm using Firefox and Chrome. You may want to try different browsers or newer versions, or adjust the font size, and see if that makes any difference.

5. Re: Batch subsystem in console

vokail Feb 18, 2020 3:51 AM (in response to cfang)

I think I have some kind of problem with my local setup.

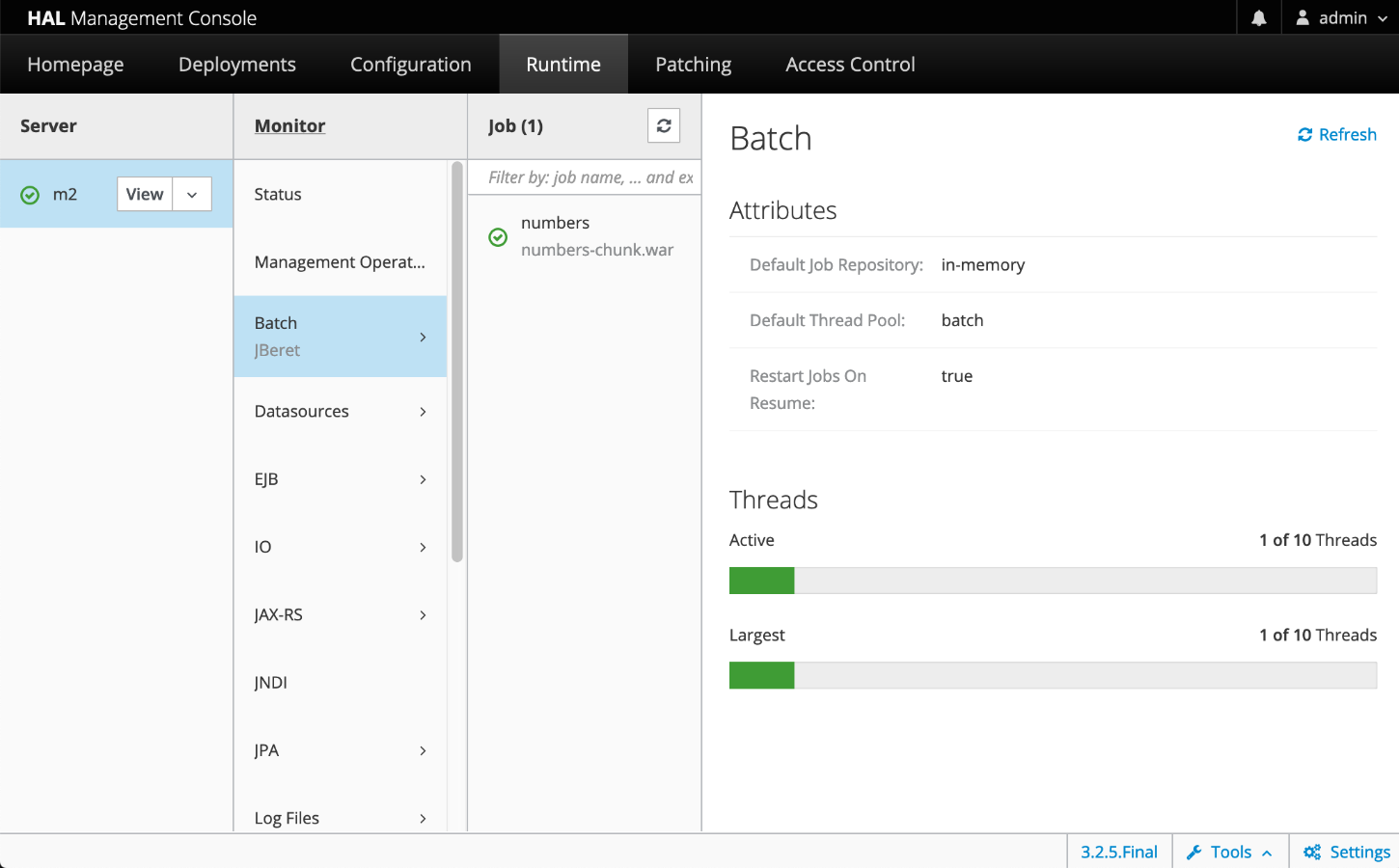

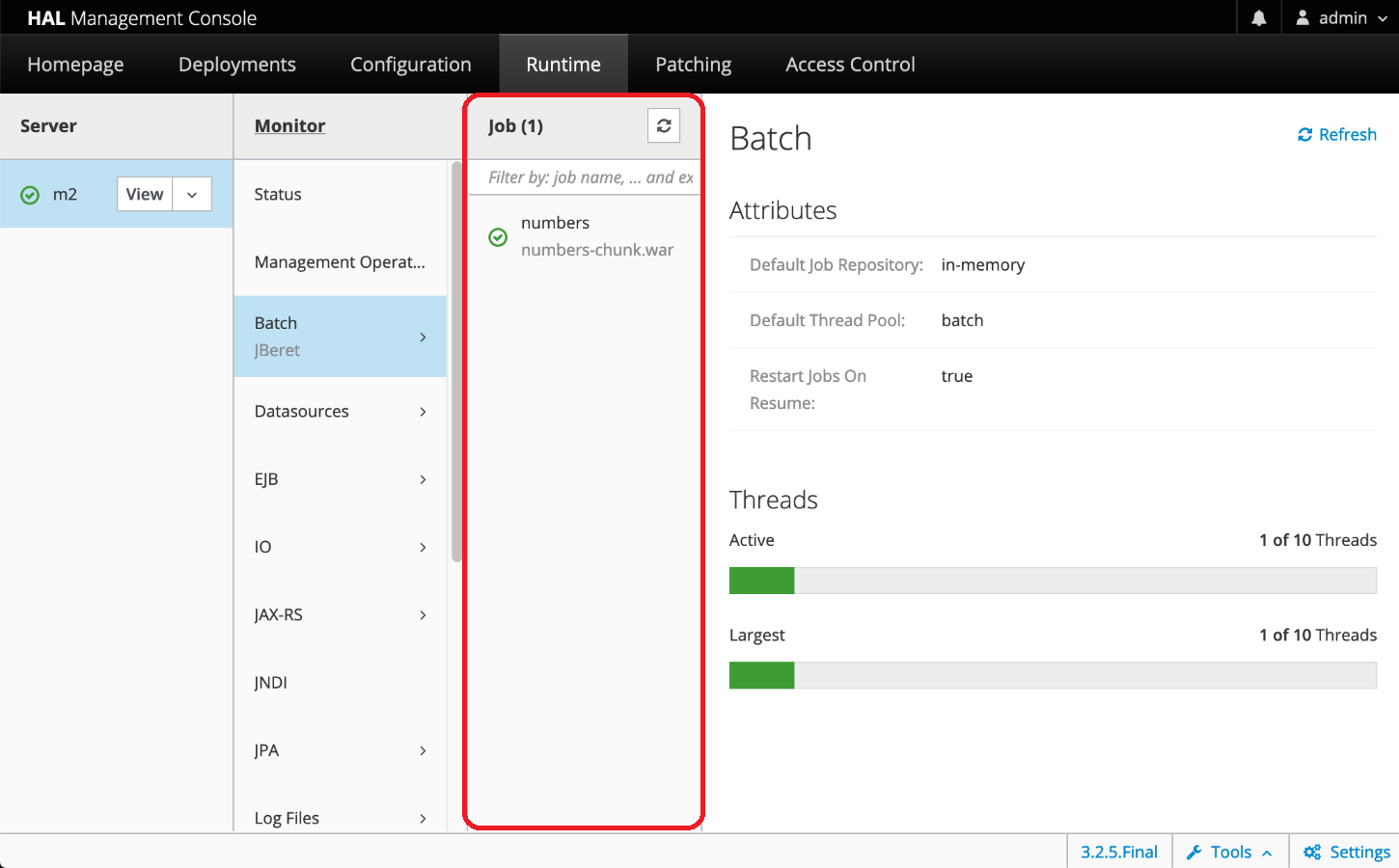

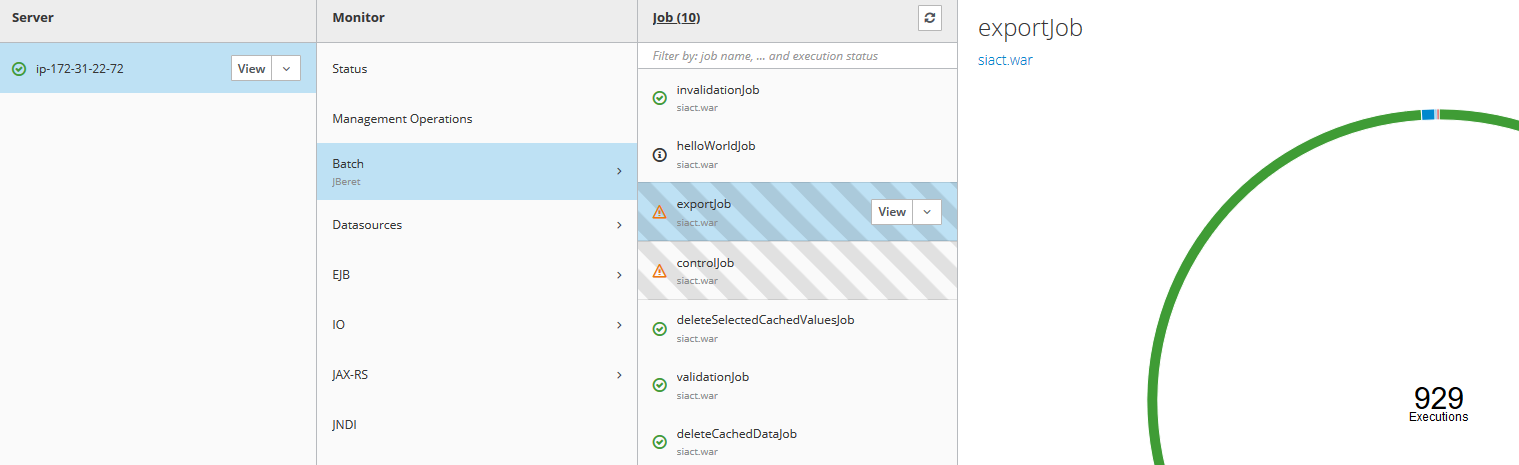

For reference, on the AWS instance I can see the Jobs tab:

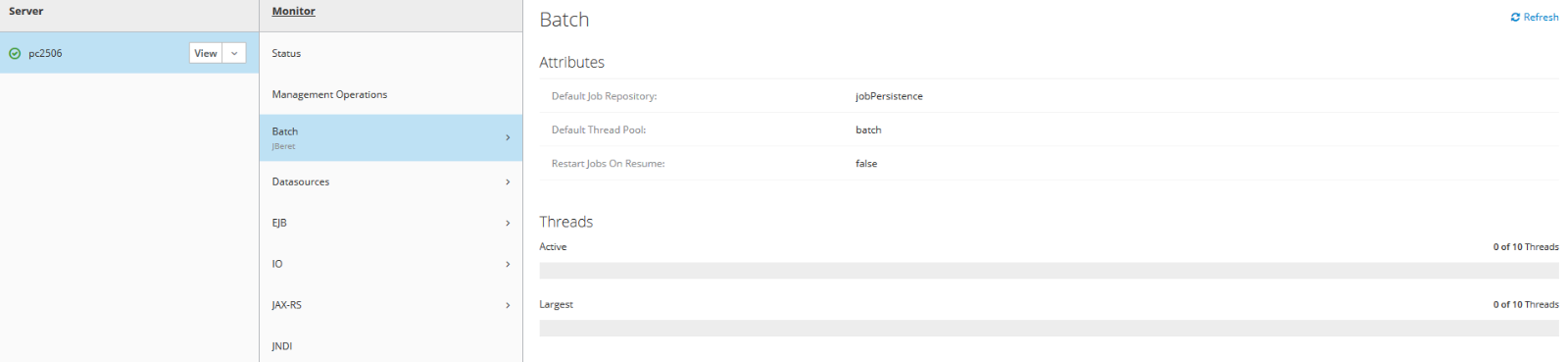

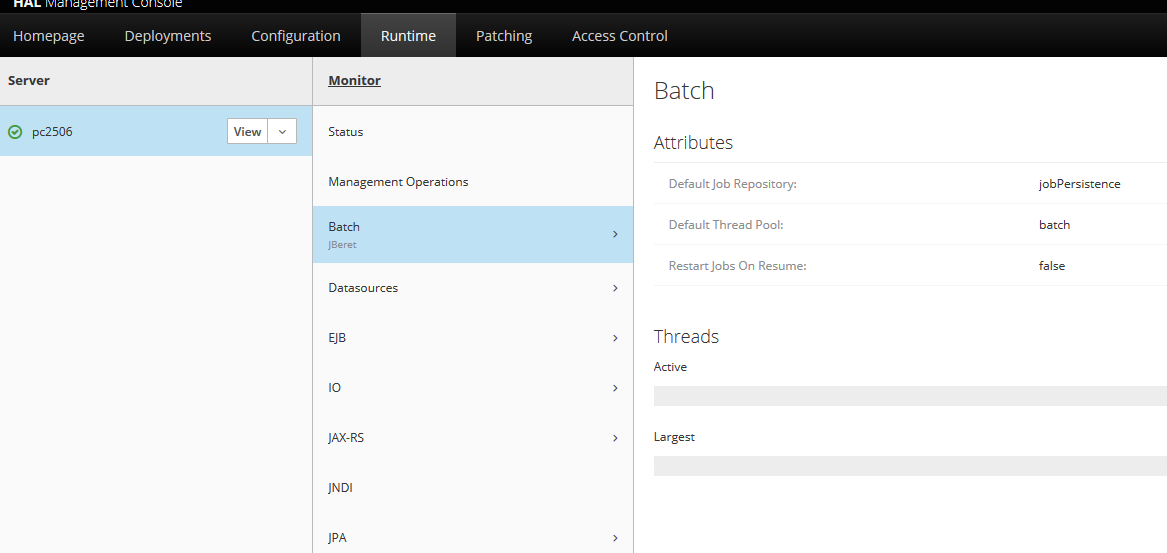

but on localhost I can't, with the same WildFly instance.

I have another question: could it be that on localhost I have a lot of jobs (over 5k rows in the job_execution table, on Postgres) and it takes a long time to load/process them?

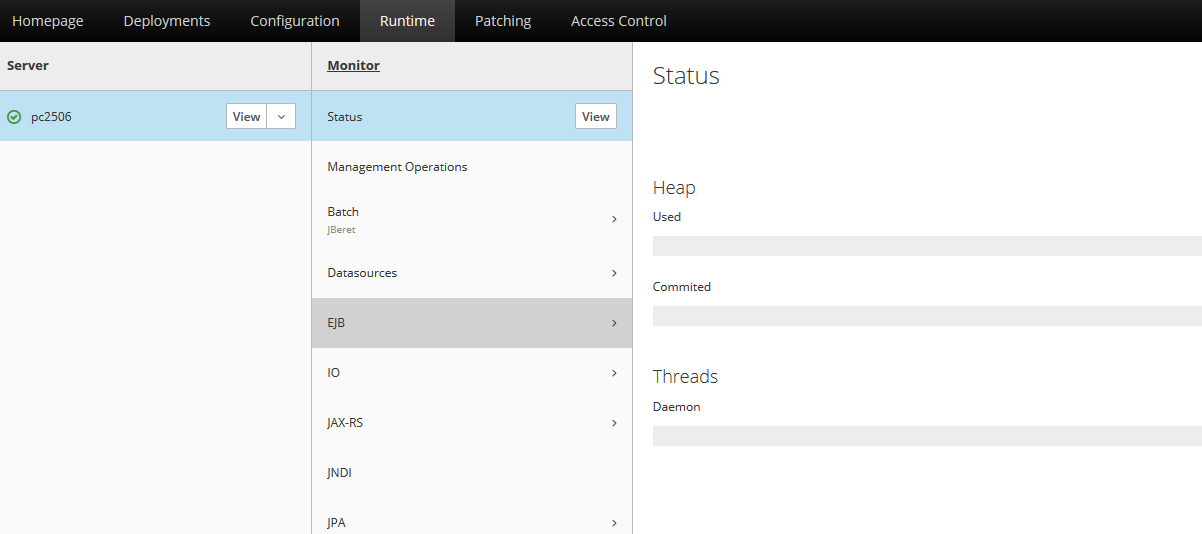

This would explain why, on localhost, my application login also hangs and even the status does not display anything:

Maybe it would be better to open a new issue so the devs can investigate this?

In the WildFly logs I can also see this; I'm not sure whether it is related (the first line is just for reference: a MongoDB connection is opened, then nothing for 12 minutes):

```
09:34:30,073 INFO [org.mongodb.driver.connection] (EJB default - 1) Opened connection [connectionId{localValue:3, serverValue:135}] to localhost:27017
09:46:50,548 WARN [com.arjuna.ats.arjuna] (Transaction Reaper) ARJUNA012117: TransactionReaper::check timeout for TX 0:ffffc0a8a6e1:97f6705:5e4ba15a:2d in state RUN
09:48:00,189 WARN [com.arjuna.ats.arjuna] (Transaction Reaper Worker 0) ARJUNA012095: Abort of action id 0:ffffc0a8a6e1:97f6705:5e4ba15a:2d invoked while multiple threads active within it.
09:48:00,880 WARN [com.arjuna.ats.arjuna] (Transaction Reaper) ARJUNA012117: TransactionReaper::check timeout for TX 0:ffffc0a8a6e1:97f6705:5e4ba15a:2d in state CANCEL
09:49:39,425 WARN [com.arjuna.ats.arjuna] (Transaction Reaper) ARJUNA012378: ReaperElement appears to be wedged: java.util.Hashtable.values(Hashtable.java:763)
com.arjuna.ats.arjuna.coordinator.BasicAction.checkChildren(BasicAction.java:3397)
com.arjuna.ats.arjuna.coordinator.BasicAction.Abort(BasicAction.java:1667)
com.arjuna.ats.arjuna.coordinator.TwoPhaseCoordinator.cancel(TwoPhaseCoordinator.java:124)
com.arjuna.ats.arjuna.AtomicAction.cancel(AtomicAction.java:215)
com.arjuna.ats.arjuna.coordinator.TransactionReaper.doCancellations(TransactionReaper.java:381)
com.arjuna.ats.internal.arjuna.coordinator.ReaperWorkerThread.run(ReaperWorkerThread.java:78)
09:49:44,551 WARN [com.arjuna.ats.arjuna] (Transaction Reaper) ARJUNA012117: TransactionReaper::check timeout for TX 0:ffffc0a8a6e1:97f6705:5e4ba15a:2d in state CANCEL_INTERRUPTED
09:50:31,761 WARN [com.arjuna.ats.arjuna] (Transaction Reaper) ARJUNA012120: TransactionReaper::check worker Thread[Transaction Reaper Worker 0,5,main] not responding to interrupt when cancelling TX 0:ffffc0a8a6e1:97f6705:5e4ba15a:2d -- worker marked as zombie and TX scheduled for mark-as-rollback
```
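If it helps to spot silent periods like the "nothing for 12 minutes" one in a longer log, here is a minimal sketch that flags gaps between consecutive timestamped lines. It assumes every relevant line starts with the `HH:mm:ss,SSS` prefix seen in the excerpt above and that all lines are from the same day; the class name `LogGapFinder` and the 5-minute threshold are hypothetical choices for illustration, not part of WildFly.

```java
import java.time.Duration;
import java.time.LocalTime;
import java.time.format.DateTimeFormatter;
import java.time.format.DateTimeParseException;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: reports gaps between consecutive timestamped log lines.
public class LogGapFinder {
    // Assumption: lines start with "HH:mm:ss,SSS", as in the excerpt above.
    private static final DateTimeFormatter TS = DateTimeFormatter.ofPattern("HH:mm:ss,SSS");

    /** Returns one description per gap longer than {@code threshold}; assumes all lines are from the same day. */
    public static List<String> findGaps(List<String> lines, Duration threshold) {
        List<String> gaps = new ArrayList<>();
        LocalTime previous = null;
        for (String line : lines) {
            if (line.length() < 12) {
                continue; // too short to carry a timestamp
            }
            LocalTime current;
            try {
                current = LocalTime.parse(line.substring(0, 12), TS);
            } catch (DateTimeParseException e) {
                continue; // e.g. stack-trace continuation lines without a timestamp
            }
            if (previous != null) {
                Duration gap = Duration.between(previous, current);
                if (gap.compareTo(threshold) > 0) {
                    gaps.add(previous + " -> " + current + " (" + gap.getSeconds() + " s)");
                }
            }
            previous = current;
        }
        return gaps;
    }

    public static void main(String[] args) {
        // First three lines of the excerpt above, abbreviated.
        List<String> sample = List.of(
                "09:34:30,073 INFO [org.mongodb.driver.connection] (EJB default - 1) Opened connection",
                "09:46:50,548 WARN [com.arjuna.ats.arjuna] (Transaction Reaper) ARJUNA012117",
                "09:48:00,189 WARN [com.arjuna.ats.arjuna] (Transaction Reaper Worker 0) ARJUNA012095");
        findGaps(sample, Duration.ofMinutes(5)).forEach(System.out::println);
    }
}
```

On those three lines only the first interval (about 740 seconds) exceeds the threshold; the roughly 70-second step between the two ARJUNA warnings is not reported.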