WildFly 18 was released in October 2019. Among the many features and improvements in this release is finer-grained configuration of the ejb3 subsystem thread pool. In this blog post, I'll walk through this feature and its benefits for application and server configuration.

Comparison of EJB Thread Pool Behavior

A WildFly EJB thread pool consists of core threads and non-core threads. Before WildFly 18, users could configure the maximum number of threads but not the number of core threads, which was always set to the same value as the maximum. WildFly 18 fixes this deficiency by letting you configure the number of core threads and the maximum number of threads independently. The following table illustrates the key differences between the previous and current versions of the WildFly EJB thread pool:

| Before WildFly 18 | WildFly 18 and later |
|---|---|
| max-threads is configurable, but core-threads is not configurable and always equals max-threads | core-threads and max-threads can be configured independently |
| On each new request, new threads are created up to the max-threads limit, even when idle threads are available | Available threads are reused as much as possible, without unnecessary creation of new threads |
| Idle threads do not time out, and keepalive-time is ignored | Idle non-core threads can time out after the keepalive-time duration |
| Incoming tasks are queued after core threads are used up | Once core threads are saturated, non-core threads are created and used to service new requests |
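The "before" column can be approximated with the JDK's own `java.util.concurrent.ThreadPoolExecutor`. This is only an analogy, not WildFly's internal executor: the class name `CoreEqualsMaxDemo` and the method `threadsCreated` are made up for illustration. With the core size equal to the maximum size, the JDK pool starts a new thread for every submitted task until the limit is reached, even though earlier threads have already finished their work.

```java
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;

// Hypothetical demo class: a JDK stand-in for a pool whose core size equals its max size.
final class CoreEqualsMaxDemo {

    // Runs `tasks` trivial tasks one at a time and returns how many threads the pool holds afterwards.
    static int threadsCreated(int coreAndMax, int tasks) {
        ThreadPoolExecutor pool = new ThreadPoolExecutor(
                coreAndMax, coreAndMax, 60, TimeUnit.SECONDS, new LinkedBlockingQueue<>());
        try {
            for (int i = 0; i < tasks; i++) {
                // Wait for each task to finish, so earlier threads have no work left before the next submit.
                pool.submit(() -> { }).get();
            }
            return pool.getPoolSize();
        } catch (Exception e) {
            throw new IllegalStateException(e);
        } finally {
            pool.shutdownNow();
        }
    }

    public static void main(String[] args) {
        // Four tasks, never more than one running at a time, still produce four threads.
        System.out.println("threads after 4 sequential tasks: " + threadsCreated(10, 4));
    }
}
```

Running `main` prints `threads after 4 sequential tasks: 4`, because the JDK pool prefers starting a new thread over reusing an idle one while it is below its core size. Being able to set a smaller core size is what avoids this growth.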

Common CLI Commands to Configure EJB Thread Pool

Users typically configure the EJB thread pool through the WildFly CLI or the admin console. The following are some common tasks for managing the EJB thread pool:

To start a WildFly standalone server instance:

cd $JBOSS_HOME/bin && ./standalone.sh

To start the WildFly CLI and connect to the running WildFly server:

$JBOSS_HOME/bin/jboss-cli.sh --connect

Once the CLI is started, you can run commands in the CLI shell. To view the default EJB thread pool configuration:

/subsystem=ejb3/thread-pool=default:read-resource

{

"outcome" => "success",

"result" => {

"core-threads" => undefined,

"keepalive-time" => {

"time" => 60L,

"unit" => "SECONDS"

},

"max-threads" => 10,

"name" => "default",

"thread-factory" => undefined

}

}

To view the default EJB thread pool configuration together with its runtime metrics:

/subsystem=ejb3/thread-pool=default:read-resource(include-runtime, recursive=true)

{

"outcome" => "success",

"result" => {

"active-count" => 0,

"completed-task-count" => 640L,

"core-threads" => undefined,

"current-thread-count" => 4,

"keepalive-time" => {

"time" => 60L,

"unit" => "SECONDS"

},

"largest-thread-count" => 4,

"max-threads" => 10,

"name" => "default",

"queue-size" => 0,

"rejected-count" => 0,

"task-count" => 636L,

"thread-factory" => undefined

}

}

To configure the number of core threads in the default thread pool:

/subsystem=ejb3/thread-pool=default:write-attribute(name=core-threads, value=5)

{"outcome" => "success"}

To read the number of core threads in the default thread pool:

/subsystem=ejb3/thread-pool=default:read-attribute(name=core-threads)

{

"outcome" => "success",

"result" => 5

}

To set the idle timeout value for non-core threads to 5 minutes:

/subsystem=ejb3/thread-pool=default:write-attribute(name=keepalive-time, value={time=5, unit=MINUTES})

{"outcome" => "success"}

You can also set the time value alone while keeping the time unit unchanged (or set the time unit alone):

/subsystem=ejb3/thread-pool=default:write-attribute(name=keepalive-time.time, value=10)

{"outcome" => "success"}

Server Configuration File

WildFly configuration is saved in server configuration files. The default standalone instance configuration is located at $JBOSS_HOME/standalone/configuration/standalone.xml. The following snippet shows the part relevant to EJB thread pool configuration:

<subsystem xmlns="urn:jboss:domain:ejb3:6.0">

<async thread-pool-name="default"/>

<timer-service thread-pool-name="default" ...>

...

</timer-service>

<remote thread-pool-name="default" ...>

...

</remote>

<thread-pools>

<thread-pool name="default">

<max-threads count="10"/>

<core-threads count="5"/>

<keepalive-time time="10" unit="minutes"/>

</thread-pool>

</thread-pools>

</subsystem>

In the above configuration, a thread pool named default is defined with a maximum of 10 threads in total, of which up to 5 are core threads. Non-core threads are eligible for removal after being idle for 10 minutes. The EJB container uses this thread pool to process asynchronous and remote EJB invocations and timer callbacks.
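To get a feel for how these three settings interact, here is a rough sketch using the JDK's `java.util.concurrent.ThreadPoolExecutor` as a stand-in. It is an analogy under stated assumptions, not WildFly's implementation: the class `NonCoreKeepAliveDemo` and its method are hypothetical, a `SynchronousQueue` is chosen so that non-core threads start as soon as all core threads are busy, and the keepalive is shortened to milliseconds so the demo finishes quickly.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.SynchronousQueue;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;

// Hypothetical demo class: core threads stay, non-core threads time out after the keepalive.
final class NonCoreKeepAliveDemo {

    // Returns {pool size while every thread is busy, pool size after idle non-core threads timed out}.
    static int[] peakAndSettledPoolSize(int core, int max, long keepAliveMillis) {
        ThreadPoolExecutor pool = new ThreadPoolExecutor(
                core, max, keepAliveMillis, TimeUnit.MILLISECONDS, new SynchronousQueue<>());
        CountDownLatch release = new CountDownLatch(1);
        try {
            // The first `core` tasks start core threads; the remaining ones start non-core threads.
            for (int i = 0; i < max; i++) {
                pool.execute(() -> {
                    try {
                        release.await();
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                    }
                });
            }
            int peak = pool.getPoolSize();
            release.countDown();
            // Poll (for at most about 5 seconds) until the idle non-core threads are gone.
            long deadline = System.nanoTime() + TimeUnit.SECONDS.toNanos(5);
            while (pool.getPoolSize() > core && System.nanoTime() < deadline) {
                Thread.sleep(20);
            }
            return new int[] {peak, pool.getPoolSize()};
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } finally {
            pool.shutdownNow();
        }
    }

    public static void main(String[] args) {
        // Same core and max sizes as the XML snippet, with a 200 ms keepalive instead of 10 minutes.
        int[] sizes = peakAndSettledPoolSize(5, 10, 200);
        System.out.println("peak=" + sizes[0] + ", after keepalive=" + sizes[1]);
    }
}
```

With these values the sketch should report a peak of 10 threads and a settled size of 5: the pool grows to its maximum under load and shrinks back to its core size once the extra threads have been idle longer than the keepalive.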

Testing EJB Thread Pool

To see the EJB thread pool in action, deploy a jar file containing the following bean class to WildFly and check the application logs, which show the name of the thread processing each timer timeout event.

package test;

import javax.ejb.*;

import java.util.logging.Logger;

@Startup

@Singleton

public class ScheduleSingleton {

private Logger log = Logger.getLogger(ScheduleSingleton.class.getSimpleName());

@Schedule(second="*/1", minute="*", hour="*", persistent=false)

public void timer1(Timer t) {

log.info("timer1 fired at 1 sec interval ");

}

@Schedule(second="*/2", minute="*", hour="*", persistent=false)

public void timer2(Timer t) {

log.info("timer2 fired at 2 sec interval ");

}

}

The above bean class defines a singleton session bean with eager initialization. Two automatic calendar-based timers are created upon application initialization: timer1 fires every second, and timer2 fires every 2 seconds.
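As a small illustration of the `*/n` increment syntax in the seconds field, the plain-Java sketch below models when such a timer fires. It assumes the usual reading of the EJB schedule expression, where `*/n` is equivalent to `0/n` and therefore matches seconds 0, n, 2n, and so on within each minute. The class `ScheduleIncrementDemo` and its methods are hypothetical helpers, not part of any EJB API.

```java
// Hypothetical helper modelling the seconds field of an expression such as second="*/2".
final class ScheduleIncrementDemo {

    // A "*/step" seconds expression matches every second (0..59) that is a multiple of step.
    static boolean firesAt(int second, int step) {
        return second % step == 0;
    }

    // Counts how many times a "*/step" timer fires between two seconds of the same minute, inclusive.
    static int firings(int fromSecond, int toSecond, int step) {
        int count = 0;
        for (int s = fromSecond; s <= toSecond; s++) {
            if (firesAt(s, step)) {
                count++;
            }
        }
        return count;
    }

    public static void main(String[] args) {
        System.out.println("timer1 (*/1) firings in seconds 31..34: " + firings(31, 34, 1));
        System.out.println("timer2 (*/2) firings in seconds 31..34: " + firings(31, 34, 2));
    }
}
```

For seconds 31 through 34 this prints 4 firings for timer1 and 2 for timer2, since timer2 only matches the even seconds 32 and 34.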

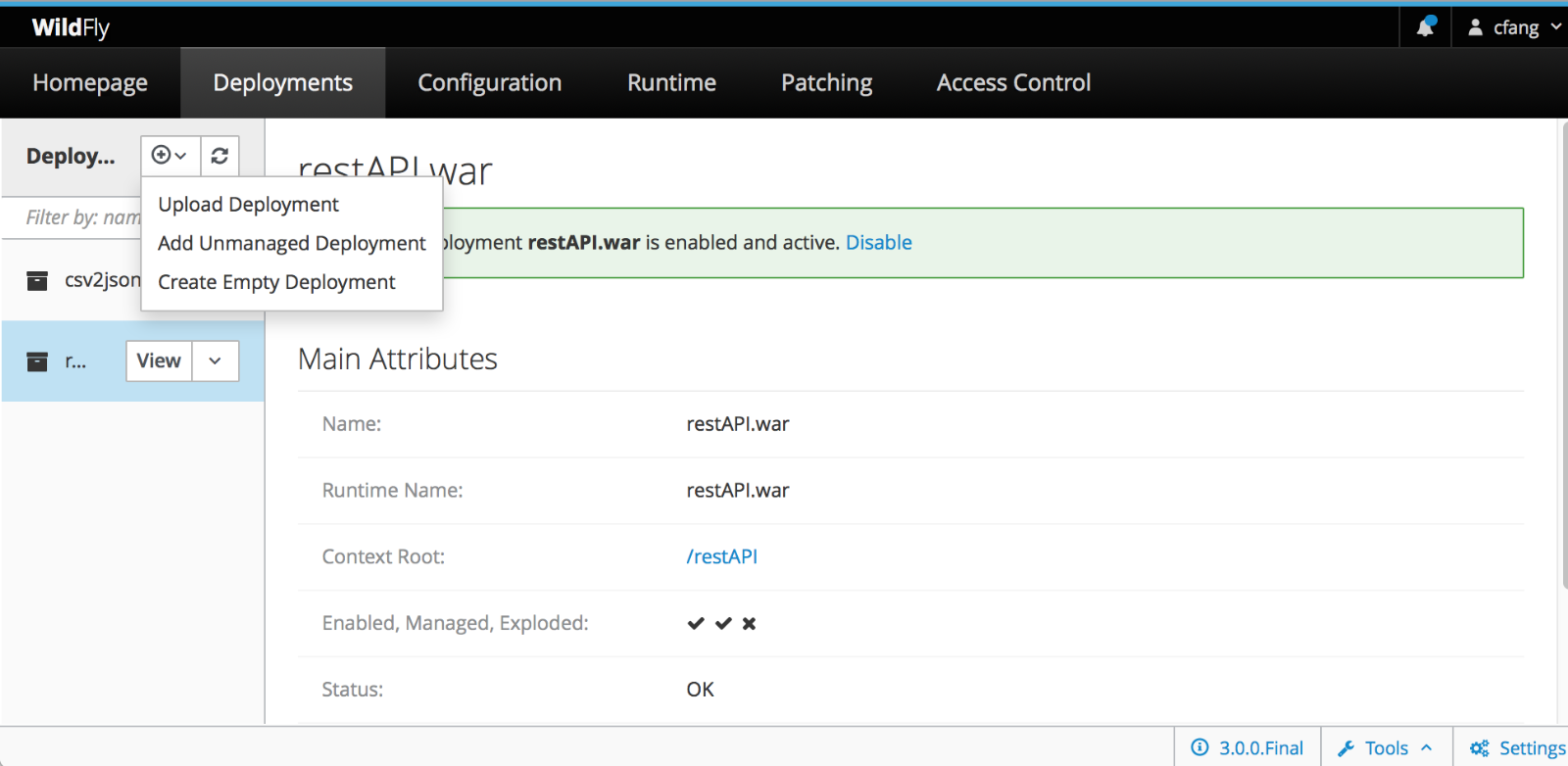

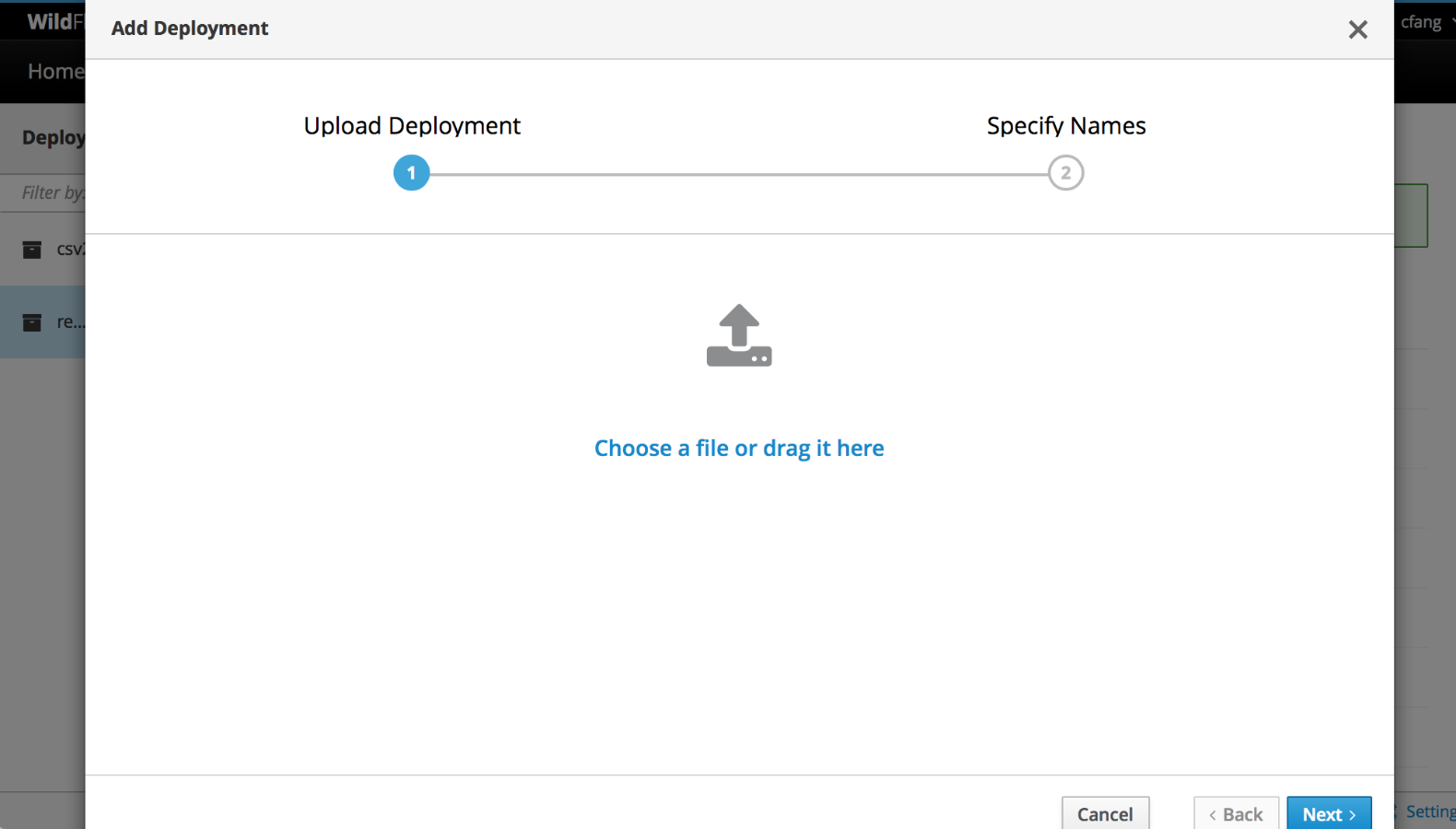

After compiling and packaging this bean class into a jar file, deploy it to the running WildFly server:

$JBOSS_HOME/bin/jboss-cli.sh -c "deploy --force schedule-timer-not-persistent.jar"

Once it is deployed, the following output is logged to the console and the server log file:

09:00:30,272 INFO [org.jboss.as.server] (management-handler-thread - 1) WFLYSRV0010: Deployed "schedule-timer-not-persistent.jar" (runtime-name : "schedule-timer-not-persistent.jar")

09:00:31,025 INFO [ScheduleSingleton] (EJB default - 1) timer1 fired at 1 sec interval

09:00:32,007 INFO [ScheduleSingleton] (EJB default - 1) timer2 fired at 2 sec interval

09:00:32,009 INFO [ScheduleSingleton] (EJB default - 2) timer1 fired at 1 sec interval

09:00:33,006 INFO [ScheduleSingleton] (EJB default - 2) timer1 fired at 1 sec interval

09:00:34,007 INFO [ScheduleSingleton] (EJB default - 1) timer1 fired at 1 sec interval

09:00:34,012 INFO [ScheduleSingleton] (EJB default - 2) timer2 fired at 2 sec interval

(EJB default - 1) and (EJB default - 2) indicate that threads 1 and 2 from the EJB thread pool named default are processing the timer timeout events. Although the timers keep firing indefinitely, only these 2 core threads are needed to handle the tasks.

You can also verify this behavior by looking at thread pool runtime metrics:

/subsystem=ejb3/thread-pool=default:read-resource(include-runtime, recursive=true)

{

"outcome" => "success",

"result" => {

"completed-task-count" => 88L,

"core-threads" => 5,

"current-thread-count" => 2,

"largest-thread-count" => 2,

"max-threads" => 10,

...

}

}

Notice that only 2 core threads, and no non-core threads, were created to complete the 88 timeout tasks.

After testing, undeploy the EJB application so that no more timers are scheduled:

$JBOSS_HOME/bin/jboss-cli.sh -c "undeploy schedule-timer-not-persistent.jar"

More Resources:

I hope this post is useful for your application development and management with WildFly. Comments, feedback, and contributions are always welcome and appreciated. For more information, please check out the following links and other resources from WildFly, JBoss, and Red Hat.

WildFly project source code repo

WildFly Community Documentation